MIT License · Python · Developer Tools

Ship LLM Apps in Hours, Not Weeks

Built by AI, tested by developers

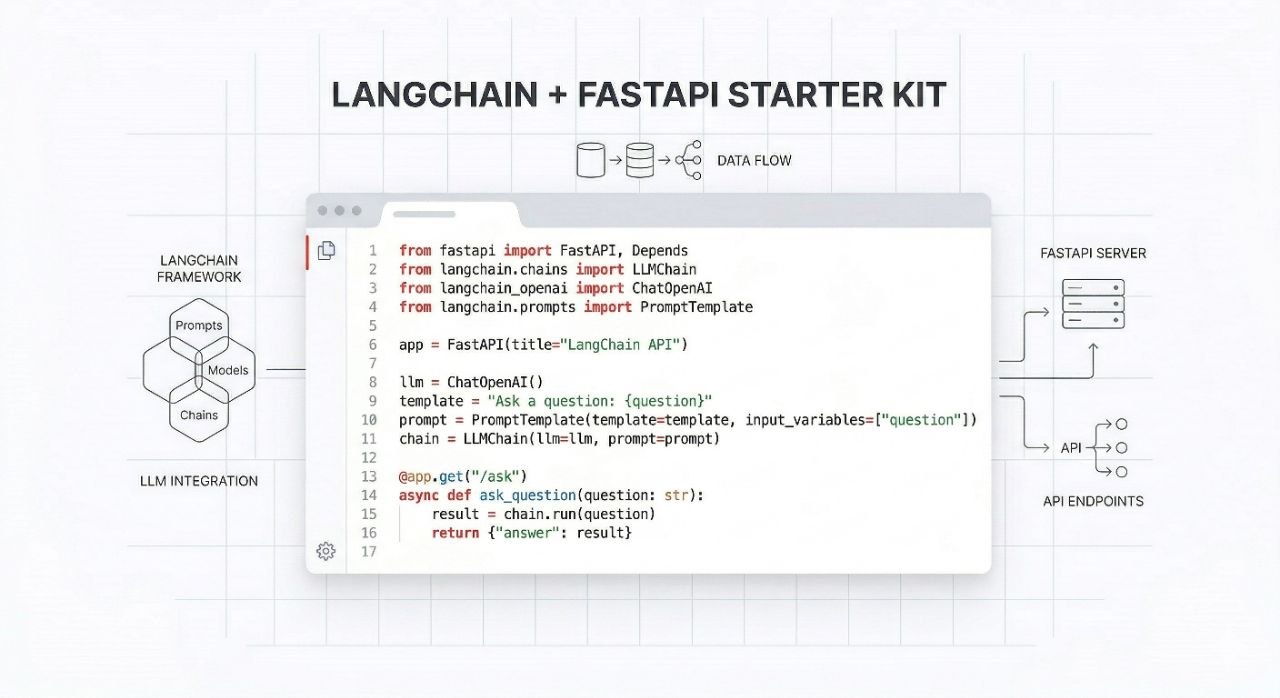

Production-ready LangChain + FastAPI boilerplate. JWT auth, RAG pipeline, SSE streaming, Redis caching, Docker deployment.

Early access — normal price $79, now $44 · One-time payment · Lifetime access · MIT license · Source code delivered immediately

The Problem

You've got an LLM idea. You know LangChain. You can wire up FastAPI.

But you're not shipping. Because production requires:

- RAG pipelines that actually work under load
- Auth that doesn't get you hacked
- Real-time streaming without WebSocket complexity
- Rate limiting, session management, deployment configs
- Tests that catch your mistakes at 2am

What You Get

RAG Pipeline

LangChain + ChromaDB. Document ingestion, vector search, and retrieval wired into FastAPI endpoints.

Production Auth

JWT (access + refresh tokens), register/login, middleware, bcrypt. Secure from day one.
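To make the access/refresh split concrete, here is a minimal sketch of HS256 token issuing and verification using only the standard library. It is an illustration under stated assumptions, not the kit's actual code: the names `SECRET`, `issue_token`, and `verify_token` are hypothetical, a real project would more likely use a maintained JWT library, and password hashing with bcrypt is not shown.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

# Hypothetical secret for illustration; load it from the environment in practice.
SECRET = b"change-me"


def _b64url(data: bytes) -> str:
    """Base64url-encode without padding, as JWT requires."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    """Restore the stripped padding before decoding."""
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def issue_token(subject: str, token_type: str, ttl_seconds: int,
                now: Optional[float] = None) -> str:
    """Build a signed token whose 'type' claim is 'access' or 'refresh'."""
    issued_at = int(time.time() if now is None else now)
    header = {"alg": "HS256", "typ": "JWT"}
    payload = {"sub": subject, "type": token_type,
               "iat": issued_at, "exp": issued_at + ttl_seconds}
    signing_input = ".".join(
        _b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, payload)
    )
    signature = hmac.new(SECRET, signing_input.encode("ascii"), hashlib.sha256).digest()
    return f"{signing_input}.{_b64url(signature)}"


def verify_token(token: str, expected_type: str,
                 now: Optional[float] = None) -> dict:
    """Check signature, expiry, and token type; raise ValueError on any failure."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("malformed token")
    header_b64, payload_b64, signature_b64 = parts
    expected = hmac.new(SECRET, f"{header_b64}.{payload_b64}".encode("ascii"),
                        hashlib.sha256).digest()
    # Constant-time comparison avoids leaking signature bytes through timing.
    if not hmac.compare_digest(expected, _b64url_decode(signature_b64)):
        raise ValueError("bad signature")
    payload = json.loads(_b64url_decode(payload_b64))
    current = time.time() if now is None else now
    if payload["exp"] <= current:
        raise ValueError("token expired")
    if payload["type"] != expected_type:
        raise ValueError("wrong token type")
    return payload
```

In this sketch a short-lived access token authorizes requests, while a longer-lived refresh token is only accepted by the endpoint that mints new access tokens. The `type` claim is what stops one from being used as the other.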

SSE Streaming

Server-Sent Events. No polling, no WebSocket complexity. Real-time token streaming.
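As a rough illustration of what token streaming over SSE looks like on the wire, the sketch below turns a stream of tokens into SSE frames. The helper names (`format_sse`, `fake_llm_tokens`, `sse_stream`) and the final `done` event are assumptions made for this example, and the token source is a stand-in for a real LLM stream.

```python
import asyncio
from typing import AsyncIterator, Optional


def format_sse(data: str, event: Optional[str] = None) -> str:
    """Format one SSE frame: optional event name, data line(s), blank line."""
    lines = []
    if event is not None:
        lines.append(f"event: {event}")
    # A payload containing newlines must be sent as several data: lines.
    for chunk in data.split("\n"):
        lines.append(f"data: {chunk}")
    return "\n".join(lines) + "\n\n"


async def fake_llm_tokens(text: str) -> AsyncIterator[str]:
    """Stand-in for an LLM token stream: yields whitespace-separated words."""
    for token in text.split(" "):
        await asyncio.sleep(0)  # hand control back to the event loop
        yield token


async def sse_stream(tokens: AsyncIterator[str]) -> AsyncIterator[str]:
    """Wrap each token in an SSE frame, then emit a final 'done' event."""
    async for token in tokens:
        yield format_sse(token)
    yield format_sse("[DONE]", event="done")
```

In a FastAPI route, a generator like `sse_stream(...)` can be returned through `StreamingResponse` with `media_type="text/event-stream"`, and the browser reads it with `EventSource`.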

Redis + Rate Limiting

Session management, request rate limiting, and response caching via Redis.
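One common way to rate-limit requests is a fixed-window counter. The sketch below keeps the counters in a plain dictionary so it runs without a server. With Redis, the same idea is usually expressed as `INCR` on a per-client, per-window key plus `EXPIRE`. The class name and parameters are hypothetical, and the kit may use a different algorithm.

```python
import time
from typing import Callable, Dict, Tuple


class FixedWindowRateLimiter:
    """Allow at most `limit` requests per client in each fixed time window."""

    def __init__(self, limit: int, window_seconds: int,
                 clock: Callable[[], float] = time.time) -> None:
        self.limit = limit
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so tests can control time
        self._counters: Dict[Tuple[str, int], int] = {}

    def allow(self, client_id: str) -> bool:
        window = int(self.clock() // self.window_seconds)
        # Drop counters from past windows (Redis would do this with key expiry).
        self._counters = {
            key: count for key, count in self._counters.items() if key[1] >= window
        }
        key = (client_id, window)
        self._counters[key] = self._counters.get(key, 0) + 1
        return self._counters[key] <= self.limit
```

A fixed window is simple and cheap, but it permits short bursts at window boundaries. A sliding window or token bucket smooths that out at the cost of more state.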

Deploy in 15 Minutes

Docker + docker-compose. Railway config included. Your app live before coffee gets cold.

Full Test Suite

pytest fixtures for FastAPI + LangChain. Mock LLM responses. CI/CD ready.
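To show what mocking an LLM response can look like, here is a small sketch using `unittest.mock.AsyncMock` from the standard library. The function `answer_question` and the assumption that the LLM object exposes an async `ainvoke` method returning a plain string are hypothetical. The kit's own fixtures may be structured differently.

```python
import asyncio
from unittest.mock import AsyncMock


async def answer_question(llm, question: str) -> str:
    """Hypothetical service function: prompt the LLM and tidy its reply."""
    raw = await llm.ainvoke(f"Answer briefly: {question}")
    return raw.strip()


def test_answer_question_uses_mocked_llm() -> None:
    # The mock replaces the real model, so the test is fast, free, and deterministic.
    fake_llm = AsyncMock()
    fake_llm.ainvoke.return_value = "  Paris  "

    result = asyncio.run(answer_question(fake_llm, "Capital of France?"))

    assert result == "Paris"
    fake_llm.ainvoke.assert_awaited_once_with("Answer briefly: Capital of France?")
```

Because the test asserts on the exact prompt passed to the mock, it also catches accidental prompt changes, not only broken post-processing.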

Early product

Be the first to review.

This is a new product. If you want a production-ready LangChain + FastAPI boilerplate with JWT auth, RAG, SSE streaming, and Docker deployment, you're looking at it.

Early adopters get the $44 price for life. GitHub repo access included.

No reviews yet — you could be the first.

Quick Start

    from fastapi import APIRouter
    from pydantic import BaseModel, Field

    from app.services.llm import get_structured_response

    router = APIRouter(prefix="/rag")


    class RAGResponse(BaseModel):
        answer: str = Field(description="The final answer to the user query")
        sources: list[str] = Field(description="List of source URLs or document IDs")
        confidence: float = Field(ge=0, le=1)


    @router.post("/query", response_model=RAGResponse)
    async def query_index(query: str):
        return await get_structured_response(query, schema=RAGResponse)

FAQ

Common questions.

What LangChain version does this use?

The kit uses LangChain 0.3+ with the latest LCEL (LangChain Expression Language) syntax. We keep it updated with major releases.

Can I use this with OpenAI, Claude, or other providers?

Yes. The LLM layer is abstracted — swap providers by changing one environment variable. OpenAI, Anthropic, and local models via Ollama all work out of the box.
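A provider switch driven by one environment variable could look roughly like the sketch below. Everything named here is an assumption for illustration: the variable name `LLM_PROVIDER`, the `ProviderConfig` type, the `select_provider` function, and the placeholder model names are not taken from the kit.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class ProviderConfig:
    name: str
    default_model: str


# Placeholder model names; substitute whatever each provider currently offers.
PROVIDERS = {
    "openai": ProviderConfig("openai", "<openai-model>"),
    "anthropic": ProviderConfig("anthropic", "<anthropic-model>"),
    "ollama": ProviderConfig("ollama", "<local-model>"),
}


def select_provider(env: Mapping[str, str] = os.environ) -> ProviderConfig:
    """Pick a provider from the hypothetical LLM_PROVIDER variable (default: openai)."""
    key = env.get("LLM_PROVIDER", "openai").strip().lower()
    if key not in PROVIDERS:
        raise ValueError(
            f"Unknown LLM_PROVIDER {key!r}; expected one of {sorted(PROVIDERS)}"
        )
    return PROVIDERS[key]
```

Failing loudly on an unknown value, rather than silently falling back to a default, makes a misconfigured deployment show up at startup instead of on the first request.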

Is this production-ready or just a tutorial?

Production-ready. JWT auth with refresh tokens, rate limiting via Redis, structured logging, health checks, and a full test suite. Same stack as YC-backed startups.

What's the license?

MIT. Use it in commercial projects, client work, SaaS products — no attribution required.

Do I get updates?

Yes. GitHub repo access with all future updates. We push updates when major dependencies release breaking changes.

Ready to ship?

One payment. Lifetime access. MIT license.

Secured by Dodo Payments